The digital age has ushered in unprecedented advancements, but the rapid proliferation of artificial intelligence (AI) presents a complex and escalating challenge: safeguarding minors from a new wave of online threats. From AI-generated deepfakes to manipulative algorithms and privacy breaches, the potential for harm is vast and growing. As AI becomes deeply embedded in the platforms and tools children use daily, a multi-faceted approach involving governments, tech companies, educators, and parents is no longer optional; it is an urgent necessity. This article provides an overview of the strategies and measures required to build a robust safety net for the next generation.

The Evolving Threat Landscape

The integration of AI into children’s online experiences is a double-edged sword. While it offers personalized learning and creative tools, it also amplifies risks. Recent reports from UN agencies highlight a staggering increase in technology-facilitated child abuse. For instance, a 2025 report by the Childlight Global Child Safety Institute noted that tech-facilitated child abuse cases in the US skyrocketed from 4,700 in 2023 to more than 67,000 in 2024. This surge is largely attributed to AI’s capabilities.

AI enables predators to analyze a child’s online behavior, emotional state, and interests to tailor highly effective grooming strategies. Furthermore, the creation of deepfakes (images, videos, or audio manipulated by AI to look real) has become a tool for a horrific new form of abuse. This includes “nudification,” where AI tools alter photos of clothed children to create fabricated sexualized images. A study across 11 countries by UNICEF, ECPAT, and INTERPOL revealed that at least 1.2 million children disclosed having their images manipulated into sexually explicit deepfakes in the past year. In some nations, this equates to one in every 25 children. UNICEF has been unequivocal in its stance: “Deepfake abuse is abuse, and there is nothing fake about the harm it causes.”

Beyond sexual exploitation, children face other AI-related dangers, including:

- Harmful Content: AI algorithms can inadvertently recommend or surface violent, hateful, or distressing content. A report cited by the Australian government found that almost two-thirds of children aged 10 to 15 had viewed such material.
- Mental Health Concerns: AI companions and chatbots, while seemingly benign, can promote addictive behaviors, provide biased or harmful advice, or fail to recognize and appropriately respond to crises like suicidal ideation.
- Data Privacy and Manipulation: Many AI tools begin collecting personal data immediately, and children may not understand the implications. This data can be used for manipulative advertising or fall into the wrong hands.

Government and Regulatory Frameworks

To combat these threats, governments worldwide are moving from awareness to action, developing legal and regulatory frameworks to hold companies accountable and mandate safety standards.

A. Expanding Legal Definitions

A primary focus for many nations is updating laws to reflect the new realities of AI-generated content. UNICEF urgently calls for all governments to expand their legal definitions of Child Sexual Abuse Material (CSAM) to explicitly include AI-generated content. This expansion must be accompanied by laws that criminalize the creation, procurement, possession, and distribution of such material, regardless of whether it depicts a real or virtual child. Without this, perpetrators can exploit legal loopholes, and law enforcement lacks the tools to act.

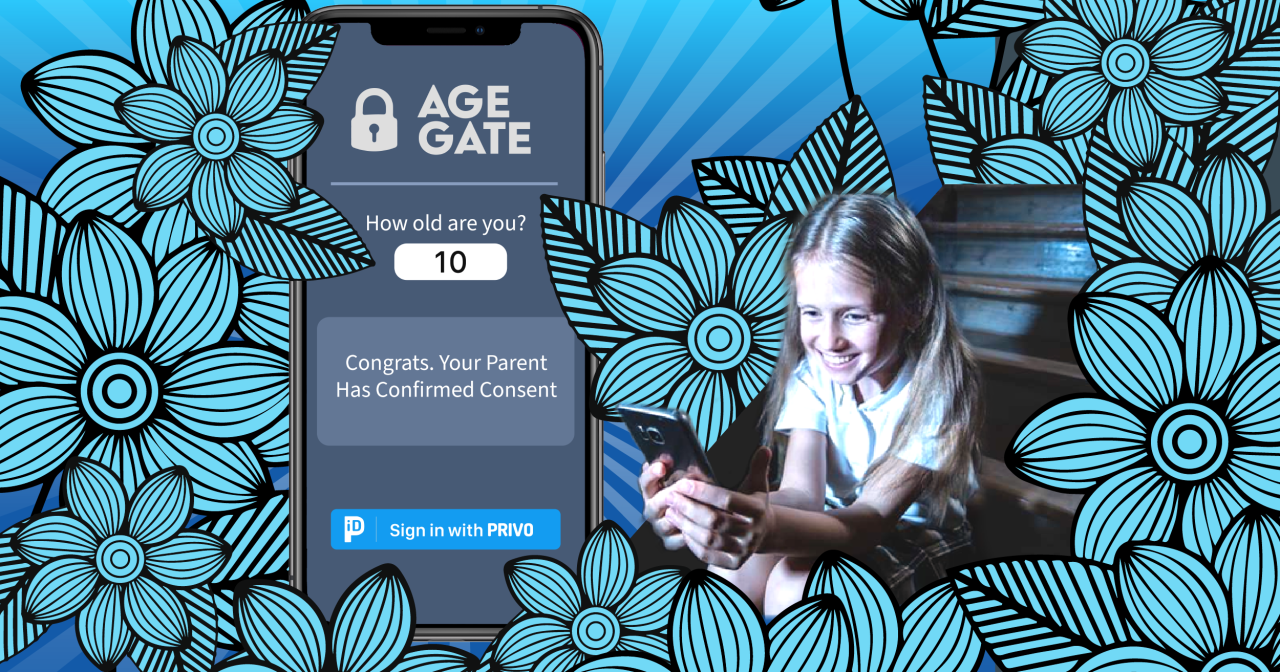

B. Implementing Age-Appropriate Design and Verification

Regulations are increasingly mandating that online services design their platforms with children’s safety in mind.

- The European Union’s Approach: The European Commission’s guidelines under the Digital Services Act (DSA), published in July 2025, set a high standard for protecting minors online. Key requirements for platforms accessible to minors include:
  - Adopting robust, reliable, and non-intrusive age verification tools.
  - Setting minors’ accounts to private by default to limit contact from strangers.
  - Limiting the visibility of minors’ content and preventing features like download or screenshotting by other users.
  - Disabling by default features that promote excessive use, such as autoplay or communication “streaks.”
- The United Kingdom’s Online Safety Act: In the UK, the Online Safety Act mandates that AI services allowing user interaction must protect children from harmful and illegal content. The government is further introducing specific offenses to criminalize AI models optimized to create child abuse material.
- Proposed US Legislation: In the United States, the proposed “Children Harmed by AI Technology Act” (CHAT Act) specifically targets companion AI chatbots. It would require:
  - Mandatory age verification for all users.
  - Parental account affiliation and consent for minor users.
  - Monitoring for and reporting of interactions involving suicidal ideation.
  - Blocking minors’ access to chatbots that engage in sexually explicit communication.
  - Regular pop-up notifications reminding users they are interacting with an AI, not a human.
- China’s National Standards: China is also developing national standards, such as the “Cybersecurity Technology Safety Guidelines for the Application of Artificial Intelligence Technology to Minors.” This standard aims to address issues ranging from content safety and algorithmic bias to data privacy and unhealthy economic behaviors, reflecting a comprehensive approach to AI governance for this vulnerable group.
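To make the EU default-settings requirements listed above more concrete, here is a minimal sketch of how a platform might apply protective defaults when an account is created. It is illustrative only: the `AccountSettings` fields, the `default_settings` function, and the adult defaults are hypothetical names and choices rather than anything prescribed by the DSA guidelines, and the `is_minor` flag is assumed to come from a separate age-assurance step that is not shown.

```python
from dataclasses import dataclass


@dataclass
class AccountSettings:
    """Hypothetical per-account settings; field names are illustrative."""
    private_profile: bool
    others_can_download: bool
    others_can_screenshot: bool
    autoplay: bool
    streaks: bool


def default_settings(is_minor: bool) -> AccountSettings:
    """Return the initial settings for a new account.

    Assumes `is_minor` was determined by an age-assurance step elsewhere.
    """
    if is_minor:
        # Protective defaults: private account, limited content visibility,
        # and engagement-driving features switched off.
        return AccountSettings(
            private_profile=True,
            others_can_download=False,
            others_can_screenshot=False,
            autoplay=False,
            streaks=False,
        )
    # Adult defaults are an assumption made purely for contrast.
    return AccountSettings(
        private_profile=False,
        others_can_download=True,
        others_can_screenshot=True,
        autoplay=True,
        streaks=True,
    )


minor_account = default_settings(is_minor=True)
adult_account = default_settings(is_minor=False)
```

The point of the sketch is that protection is the starting state: a minor would have to opt in to riskier features rather than opt out of them.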

C. Enabling Oversight and Compliance

Regulations are only as strong as their enforcement. Governments are empowering bodies like the FTC in the US and similar agencies in Europe to enforce new AI safety laws, treating violations as unfair or deceptive practices. Periodic risk reviews, as suggested by the EU, require platforms to continuously assess the risks minors face on their services and the effectiveness of their mitigation measures.

The Responsibility of the Tech Industry

While governments set the rules, technology companies are on the front lines of implementation. The consensus from experts and UN bodies is that safety cannot be an afterthought; it must be integrated into the very fabric of AI systems from the outset.

A. Safety-by-Design

This principle, championed by UNICEF, means proactively building safeguards into AI models and applications, not just reacting to problems after they occur. For developers, this involves:

- Robust Guardrails: Programming AI models to refuse to generate harmful, abusive, or sexually explicit content, especially when it involves minors.
- Data Governance: Ensuring training datasets are clean and free from contaminated, biased, or illegal content. This also involves protecting children’s data privacy throughout the AI supply chain.
- Algorithmic Transparency: Designing recommender systems that do not exploit children’s vulnerabilities. The EU DSA guidelines suggest prioritizing explicit user signals over behavioral data for minors and empowering them to control their own feeds.
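As a rough illustration of prioritizing explicit user signals over behavioral data for minors, the sketch below ranks feed candidates with a reduced weight on behavioral signals when the user is a minor. Everything here is an assumption for illustration: the `Candidate` fields, the `rank_feed` function, the additive scoring, and the default weight of 0.0 are hypothetical and are not taken from the DSA guidelines or from any real recommender system.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    """Hypothetical feed item with two pre-computed relevance scores."""
    item_id: str
    explicit_score: float    # e.g. topics the user chose to follow
    behavioral_score: float  # e.g. inferred from watch time or clicks


def rank_feed(
    candidates: List[Candidate],
    is_minor: bool,
    minor_behavioral_weight: float = 0.0,  # assumed value, not a standard
) -> List[Candidate]:
    """Order candidates by a weighted sum of explicit and behavioral scores.

    For minors, the behavioral score is down-weighted so that what the
    user explicitly asked for dominates the ranking.
    """
    weight = minor_behavioral_weight if is_minor else 1.0
    return sorted(
        candidates,
        key=lambda c: c.explicit_score + weight * c.behavioral_score,
        reverse=True,
    )


items = [
    Candidate("followed_topic", explicit_score=0.9, behavioral_score=0.1),
    Candidate("engagement_bait", explicit_score=0.2, behavioral_score=1.0),
]
minor_order = [c.item_id for c in rank_feed(items, is_minor=True)]
adult_order = [c.item_id for c in rank_feed(items, is_minor=False)]
```

With these sample values, the item the user explicitly followed ranks first for a minor, while the engagement-driven item ranks first when behavioral signals carry full weight.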

B. Proactive Content Moderation

Digital companies must shift from merely removing abusive content to actively preventing its circulation. This requires significant investment in advanced detection technologies that can identify AI-generated CSAM immediately, rather than relying on reports from victims that can take days to process. This includes developing tools to detect “nudified” images and other deepfake abuses.

C. Engaging as Partners

Cosmas Zavazava of the ITU emphasizes that the private sector is a crucial partner in this fight. He notes that while tech giants may have initially been concerned about stifling innovation, the message is clear: responsible deployment of AI is compatible with profitability and market share. Regular dialogue between UN bodies and industry leaders is essential to raise red flags and collaboratively develop solutions that protect populations, especially children.

Empowering Parents and Caregivers

Regulations and corporate responsibility form the structural backbone of child safety, but the day-to-day reality happens at home. Parents and caregivers are the first line of defense and must be equipped with the knowledge and tools to navigate this complex landscape.

A. Building Digital and AI Literacy

A major hurdle identified by UN bodies is the widespread “AI-illiteracy” among parents, teachers, and even policymakers. To protect children, adults must first understand the technology. This includes learning how AI works, its potential biases, and its risks. Resources such as UNICEF’s AI guide for parents and guides from BBB National Programs’ Children’s Advertising Review Unit (CARU) are invaluable for building this foundational knowledge.

B. Practical Steps for At-Home Safety

Experts recommend several concrete actions families can take immediately to create a safer digital environment:

- Manage Privacy and Security Settings:
  - Review and tighten privacy settings on all apps, games, and devices. Turn off microphones and cameras when not in use.
  - Disable in-app purchases or remove saved payment methods from devices to prevent unwanted or manipulative spending.
  - Use ad blockers where available and consider ad-free versions of services to reduce exposure to manipulative marketing.
- Foster Open Communication:
  - Talk regularly with children about their online experiences, the apps they use, and the difference between AI-generated content and reality.
  - Encourage a healthy skepticism. Teach children that AI can be wrong, biased, or misleading and practice simple fact-checking together.
  - Co-view and co-play when possible. Engaging with technology together turns screen time into a shared family experience and opens doors for conversation.
- Establish Family Rules:
  - Create a Family Online Safety Agreement that outlines expectations for internet use, time limits, and approved platforms.
  - Set clear boundaries for AI tools and ensure children know they can come to you if they encounter anything that makes them feel scared, uncomfortable, or confused.
  - Prepare for mistakes by learning how to use platform reporting tools and showing children how to use them.

The Role of Educators and Schools

Educators are on the front lines, observing children’s behavior and development daily. They play a pivotal role in both prevention and intervention.

A. Integrating AI Safety into Curriculum

Schools must move beyond basic internet safety and incorporate AI literacy into their curricula. This includes teaching students:

- How to identify AI-generated content (deepfakes, fake profiles).
- The importance of protecting personal data.
- The ethical implications of using AI tools.
- How to recognize online exploitation and grooming tactics.

B. Utilizing Available Resources

Campaigns like the U.S. Department of Homeland Security’s Know2Protect offer a wealth of free resources specifically designed for educators. These include:

- Tips2Identify Exploitation and Abuse for Educators: Fact sheets to help staff recognize the signs of abuse.
- Classroom Activities: Crosswords, word searches, and bingo games that teach online safety terms in an engaging way.
- “Top 10 Tips2Protect for Teens”: Posters and discussion guides to facilitate age-appropriate conversations in the classroom.
- Resources for Parents: Handouts like internet safety checklists and first-day-of-school picture signs that can be sent home to families, reinforcing the school’s message.

Conclusion: A Call for Collective Action

Protecting minors from the risks of AI content is not a challenge that any single group can solve alone. It demands a unified, global effort. The framework for safety is being built through the combined actions of international bodies like the UN, national governments enacting robust laws, and technology companies committing to safety-by-design.

Yet the most critical components of this safety net are at the community and family level. An AI-literate populace, where parents, teachers, and children themselves understand both the power and the peril of these new tools, is the ultimate safeguard. As UNICEF powerfully stated, “Children cannot wait for the law to catch up.” The harm is happening now. By fostering open dialogue, demanding accountability from tech giants, and implementing practical safety measures at home and school, we can work together to ensure that the digital world remains a place of opportunity and wonder for every child, not a source of hidden danger.