The artificial intelligence revolution has always been defined by speed: faster training times, lower-latency inference, and exponential growth in floating-point operations. For years, the semiconductor industry operated under a simple premise: the chip with the most teraFLOPS wins. But as we navigate through 2026, a fundamental truth has emerged that is reshaping the entire landscape of computing. The performance race has hit a wall, and that wall is security. The very chips designed to accelerate intelligence have become the new frontier for cyber warfare, intellectual property theft, and geopolitical maneuvering. AI accelerators, from the GPUs powering massive cloud clusters to the tiny NPUs in our laptops, are no longer just engines of computation. They are fortresses that must be defended, or vulnerabilities that could bring down trillion-dollar enterprises and national infrastructure alike.

This comprehensive article explores the multifaceted world of AI accelerator chip security. We will dissect the vulnerabilities inherent in these specialized processors, examine the cutting-edge defensive architectures being deployed by industry leaders, analyze the shifting geopolitical landscape driven by sovereign AI initiatives, and project the future of secure computing as we move toward an era of autonomous agents and post-quantum threats. The stakes have never been higher, and understanding the silicon-level arms race is essential for anyone involved in technology, business, or national security.

The Expanding Attack Surface: Why AI Chips Are Vulnerable

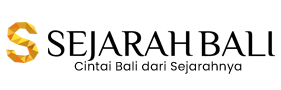

The security community has long focused on software vulnerabilities: buffer overflows, injection attacks, and authentication bypasses. However, the deep integration of AI into critical infrastructure has forced a paradigm shift. Attackers are now looking beneath the operating system, beneath the firmware, and into the very silicon itself. The complexity of modern AI accelerators, which are essentially heterogeneous Systems-on-Chip (SoCs) packed with specialized cores, high-bandwidth memory, and high-speed interconnects, creates a far larger attack surface than traditional processors.

Hardware-Level Vulnerabilities

At the most fundamental level, the physical hardware of an AI accelerator can be compromised. Unlike software bugs that can be patched remotely, hardware vulnerabilities are often permanent and require costly respins or even complete device replacement. Recent research has identified that AI accelerators are inherently vulnerable to various attacks stemming from multiple sources. The models themselves can be manipulated; attackers can induce malfunction through minor perturbations to inputs or by directly attacking model parameters stored in memory.

The memory subsystems within accelerators are particularly susceptible. High-bandwidth memory (HBM), which is built from stacked DRAM dies, and conventional DRAM are both vulnerable to Rowhammer attacks, where repeatedly accessing one row of memory causes bit flips in adjacent rows. This physical phenomenon, exploited maliciously, can corrupt model weights or alter computation results without any software vulnerability being present.
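To see why a single flipped bit matters for model weights, here is a minimal sketch that inverts one bit of a weight stored as an IEEE 754 float32. It does not model Rowhammer itself; the function name `flip_bit` and the example weight are illustrative assumptions.

```python
import struct

def flip_bit(value: float, bit: int) -> float:
    """Return `value` (stored as float32) with one bit inverted; bit 0 is the LSB."""
    if not 0 <= bit < 32:
        raise ValueError("float32 has bits 0..31")
    # Reinterpret the float32 as a 32-bit integer, flip the bit, convert back.
    (raw,) = struct.unpack("<I", struct.pack("<f", value))
    (flipped,) = struct.unpack("<f", struct.pack("<I", raw ^ (1 << bit)))
    return flipped

weight = 0.5  # hypothetical model weight
print(flip_bit(weight, 0))   # lowest mantissa bit: the weight barely changes
print(flip_bit(weight, 30))  # highest exponent bit: the weight becomes 2**127
```

The position of the flip decides the damage: a mantissa flip is lost in numerical noise, while an exponent flip turns a small weight into a value large enough to dominate every downstream activation.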

Furthermore, timing violations can be deliberately induced to cause entire designs to malfunction. Attackers can exploit the precise timing requirements of high-speed AI accelerators to push signals just beyond their setup and hold windows, creating computational errors that can cascade through neural network layers.

The Threat of Hardware Trojans

One of the most insidious threats in the AI accelerator space is the Hardware Trojan (HT). These are malicious circuits secretly inserted into a design during the manufacturing or design process. The danger is compounded by the modern fabless semiconductor model, where chips are designed in one country, manufactured in another, and assembled in a third. Each step in this globalized supply chain presents an opportunity for insertion.

Hardware Trojans typically consist of a trigger and a payload. During normal operation, the trigger monitors specific signals or conditions. When these conditions are met (perhaps a specific input pattern, or a counter reaching a certain value), the trigger activates the payload, which then executes its malicious function. This could range from leaking sensitive data through a covert channel to completely disabling the chip at a critical moment.
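The trigger-and-payload structure can be illustrated with a toy behavioral model. This is a sketch for defenders reasoning about test coverage, not a description of any real Trojan; the class `TrojanedAdder`, the operand value `0xA5`, and the threshold of three are all hypothetical.

```python
class TrojanedAdder:
    """Toy 8-bit adder with a hypothetical counter-based Trojan."""

    def __init__(self, magic: int = 0xA5, threshold: int = 3):
        self.magic = magic          # rare operand value the trigger watches for
        self.threshold = threshold  # sightings needed before the payload fires
        self.seen = 0

    def add(self, a: int, b: int) -> int:
        result = (a + b) & 0xFF
        # Trigger: count how often the rare operand appears.
        if a == self.magic:
            self.seen += 1
        # Payload: once armed, silently corrupt the least significant bit.
        if self.seen >= self.threshold:
            result ^= 0x01
        return result

adder = TrojanedAdder()
print([adder.add(a, 1) for a in (1, 2, 0xA5, 0xA5)])  # results are still correct
print(adder.add(0xA5, 1))  # third sighting arms the payload
print(adder.add(1, 1))     # later results are off by one bit
```

Because the payload stays dormant until a rare sequence occurs, functional tests that never present the magic operand three times observe a perfectly correct adder.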

For FPGAs, which are often used for AI acceleration in edge devices, attackers can manipulate Look-Up Tables (LUTs) to execute attacks or embed Trojans in dark silicon regions (areas of the chip left unused by the design), achieving stealthy attacks with zero resource overhead. Alternatively, Trojans can be inserted by modifying the configuration bitstream file that programs the FPGA. For ASICs, automated Hardware Trojan generation frameworks now exist that can systematically insert Trojans into a given netlist, enabling attackers to explore the attack surface and embed malicious logic that is extremely difficult to detect during post-manufacturing testing.

Supply Chain and Manufacturing Manipulation

Beyond deliberate Trojan insertion, the manufacturing process itself can introduce vulnerabilities through process variation. Research into post-CMOS devices like Spin-Orbit Torque Magnetic Tunnel Junctions (SOT-MTJs) has revealed a disturbing vulnerability profile. An adversary without access to the circuit netlist could differentially influence the behavior of machine learning applications through global modification of oxide thickness during manufacturing.

This manufacturing-side intrusion can result in bit-flips of the weights stored in crossbar arrays. In one study, with just 0.05% of bits in a crossbar having a flipped resistance state, certain digits in the MNIST dataset showed significantly higher error rates than others. Critically, this was achieved without modifying the netlist or having access to the circuit design itself; the adversary simply altered the manufacturing process parameters. This type of attack is particularly dangerous because it leaves no obvious signature in the logic design and can be dismissed as normal process variation during post-manufacturing inspection.
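To give a sense of scale for the 0.05% figure, the sketch below flips that fraction of cells in a simulated binary crossbar. The 128 x 128 array size, the random seeds, and the function name `flip_cells` are assumptions for illustration; the study's actual device model and array dimensions are not reproduced here.

```python
import random

def flip_cells(cells: list[int], fraction: float, seed: int = 0) -> list[int]:
    """Return a copy of a binary crossbar with `fraction` of its cells inverted."""
    rng = random.Random(seed)
    n_flips = round(len(cells) * fraction)
    corrupted = cells.copy()
    # Choose distinct cell positions so each selected cell flips exactly once.
    for idx in rng.sample(range(len(cells)), n_flips):
        corrupted[idx] ^= 1
    return corrupted

rows, cols = 128, 128  # hypothetical crossbar size
rng = random.Random(1)
crossbar = [rng.randint(0, 1) for _ in range(rows * cols)]
faulty = flip_cells(crossbar, 0.0005)  # 0.05% of cells
changed = sum(a != b for a, b in zip(crossbar, faulty))
print(f"{changed} of {len(crossbar)} cells flipped")
```

In an array of this assumed size, 0.05% amounts to only a handful of cells, which is why such a change can plausibly pass as ordinary process variation.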

The Automated Generation Platform Paradox

To accelerate time-to-market and enable rapid deployment of AI accelerators on IoT and edge devices, the industry has embraced automated AI accelerator generation platforms. These platforms allow software engineers to provide a trained model and receive a corresponding hardware design, treating the accelerator-generation process as a closed box. While this significantly reduces development workload, it creates a new and largely unexplored security risk.

In these automated platforms, attackers can embed malicious code within the platform components themselves. During the design and generation phase, the platform receives information about the model structure and parameters. An adversary with access to the platform (perhaps a malicious insider at the platform vendor) can analyze model vulnerabilities and insert Hardware Trojans into the generated design. Simultaneously, the software generation flow can be compromised to inject malicious instructions into the communication protocol between the controller and the accelerator, effectively creating a remote trigger for the hardware Trojan.

The exploration algorithms within these platforms, such as Design Space Exploration (DSE) engines, can be repurposed for malicious ends. These algorithms are designed to find optimal system parameter settings; an attacker can reuse them to explore target parameter values for an attack, or insert malicious exploration algorithms that identify the most vulnerable points in the neural network to target.

Architectural Defenses: Building the Silicon Fortress

In response to these escalating threats, the semiconductor industry has embarked on an ambitious effort to redesign AI accelerators with security as a primary design constraint, not an afterthought. The year 2026 marks a turning point where Confidential Computing has moved from a niche enterprise feature to a mainstream requirement for AI hardware.

Trusted Execution Environments at Rack Scale

NVIDIA’s newly launched Vera Rubin platform exemplifies this shift. Succeeding the Blackwell architecture, the Rubin NVL72 introduces the industry’s first rack-scale Trusted Execution Environment (TEE). Previous generations secured individual chips, but trillion-parameter models must be distributed across dozens of GPUs. The Rubin architecture extends protection across the entire NVLink domain, creating a unified security perimeter for the entire rack.

Through the BlueField-4 Data Processing Unit (DPU) and the Advanced Secure Trusted Resource Architecture (ASTRA), NVIDIA provides hardware-accelerated attestation. This ensures that model weights are only decrypted within the secure memory space of the Rubin GPU, and that the integrity of the entire computation can be verified by remote parties. Even if the host operating system is compromised, the AI workload running within the TEE is designed to remain confidential and tamper-resistant.
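The general idea of attestation-gated key release can be sketched as follows. This is not NVIDIA's protocol: a shared HMAC key stands in for the device's attestation key (real schemes typically use asymmetric signatures and a vendor certificate chain), and every name here, including `make_quote`, `verify_quote`, and `release_weights_key`, is hypothetical.

```python
import hashlib
import hmac
import secrets
from typing import Optional, Tuple

# Assumption: one shared secret stands in for the device's attestation key.
DEVICE_KEY = secrets.token_bytes(32)

def make_quote(firmware: bytes, nonce: bytes) -> Tuple[bytes, bytes]:
    """Device side: measure the firmware and authenticate (measurement, nonce)."""
    measurement = hashlib.sha256(firmware).digest()
    tag = hmac.new(DEVICE_KEY, measurement + nonce, hashlib.sha256).digest()
    return measurement, tag

def verify_quote(measurement: bytes, tag: bytes, nonce: bytes, expected: bytes) -> bool:
    """Verifier side: check authenticity, freshness, and the expected measurement."""
    good_tag = hmac.new(DEVICE_KEY, measurement + nonce, hashlib.sha256).digest()
    return hmac.compare_digest(tag, good_tag) and hmac.compare_digest(measurement, expected)

def release_weights_key(firmware: bytes, golden_firmware: bytes) -> Optional[bytes]:
    """Challenge the device and release a key only if the quote checks out."""
    nonce = secrets.token_bytes(16)                 # fresh per challenge, blocks replay
    measurement, tag = make_quote(firmware, nonce)  # would run on the device
    expected = hashlib.sha256(golden_firmware).digest()
    if verify_quote(measurement, tag, nonce, expected):
        return secrets.token_bytes(32)              # placeholder for the model key
    return None

print(release_weights_key(b"fw-v2", b"fw-v2") is not None)  # True: key released
print(release_weights_key(b"fw-evil", b"fw-v2") is None)    # True: key withheld
```

The nonce ties each quote to a single challenge, so a recorded quote from a previously healthy device cannot be replayed later.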

AMD has countered with its Instinct MI400 series and the Helios platform, leveraging SEV-SNP (Secure Encrypted Virtualization-Secure Nested Paging) technology. The MI400 features up to 432GB of HBM4 memory, where every byte is encrypted at the controller level. This prevents cold boot attacks and memory scraping, which were theoretical vulnerabilities in earlier AI hardware. AMD’s approach emphasizes open standards and is gaining traction among governments and sovereign AI initiatives wary of proprietary ecosystems.

Intel’s Jaguar Shores, a next-generation AI accelerator designed for secure enterprise inference, utilizes Trust Domain Extensions (TDX) and a new feature called TDX Connect. This provides a secure, encrypted PCIe/CXL 3.1 link between the Xeon processor and the accelerator, ensuring that data moving between the CPU and GPU is never visible to the system bus in plaintext. This represents a significant advance over software-level encryption, which added latency and was susceptible to side-channel attacks.

The Hardware Off-Switch Concept

An emerging paradigm in AI accelerator security is the concept of a hardware “off-switch.” This is not a single button but a layered architecture that can halt, fence, or degrade an accelerator’s capabilities deterministically, independent of the main operating system, even under partial compromise.

The off-switch architecture spans multiple levels of the hardware stack:

- Root of Trust and Lifecycle States: Every secure accelerator begins with an immutable boot ROM and device-unique keys burned via eFuses or Physically Unclonable Functions (PUFs). The ROM verifies first-stage firmware, enforces lifecycle states (development, production, RMA), and permanently disables invasive debug interfaces in production mode.

- Secure Boot with Anti-Rollback: Firmware and microcode must be measured and verified against monotonic counters. This prevents attackers from reflashing a vulnerable older image to bypass security policies. An off-switch that reboots into an exploitable image is worthless, so anti-rollback protection is essential.

- Internal Privilege Hierarchy and Partitioning: Modern AI chips contain numerous engines: tensor cores, schedulers, DMA units, and encoders. A hardware privilege hierarchy ensures that a minimal security monitor, running on a dedicated microcontroller within the device, can preempt or fence the rest of the chip. Technologies like SR-IOV or MIG (Multi-Instance GPU) partitions isolate tenants, providing each with dedicated engines, queues, and memory slices backed by per-context page tables and memory encryption. Off-switch commands can then target a single partition, all partitions, or the entire device.

- IOMMU and DMA Firewalls: Accelerators must never issue bus transactions to arbitrary host physical addresses. Enforcing per-context I/O page tables within the accelerator, backed by an external IOMMU, creates a critical boundary. A hardware panic fence can tear down all outstanding DMA mappings on a kill event, drop in-flight transactions, and zero device-side address translation caches, ensuring that a compromised accelerator cannot be used to attack the host system.

- Memory Encryption and Zeroization: Encrypting model weights and activations at rest in device memory using keys that never leave on-chip secure islands is now standard practice. On an off-switch event, rapid zeroization of key ladders and scrubbing of critical pages can be triggered, using DMA engines repurposed for high-bandwidth wipe operations.

- Deterministic Preemption and Watchdogs: Neural network kernels can be long-running. Implementing preemption points in scheduler microcode and enforcing hardware watchdogs per queue or partition ensures that if a kernel exceeds its time slice or exhibits forbidden behavior, the device automatically preempts or resets the partition. A global watchdog, controlled only by the security core, can escalate to device-wide reset if necessary.
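The anti-rollback rule from the list above can be sketched in a few lines. This is a simplified model under stated assumptions: an allow-list of trusted digests stands in for signature verification, an integer field models the fuse-backed monotonic counter, and the names `Image`, `BootRom`, and `measure` are hypothetical.

```python
import hashlib
from dataclasses import dataclass

@dataclass(frozen=True)
class Image:
    version: int
    payload: bytes

def measure(image: Image) -> str:
    """Hash the version together with the payload so neither can be altered alone."""
    return hashlib.sha256(image.version.to_bytes(4, "big") + image.payload).hexdigest()

class BootRom:
    def __init__(self, trusted_digests: set, min_version: int = 0):
        self.trusted_digests = trusted_digests
        self.min_version = min_version  # models a monotonic counter held in fuses

    def boot(self, image: Image) -> bool:
        if measure(image) not in self.trusted_digests:
            return False                  # unknown or modified image
        if image.version < self.min_version:
            return False                  # authentic but older: rollback attempt
        self.min_version = image.version  # the counter only ever moves forward
        return True

v1, v2 = Image(1, b"old firmware"), Image(2, b"patched firmware")
rom = BootRom({measure(v1), measure(v2)})
print(rom.boot(v1), rom.boot(v2), rom.boot(v1))  # the final attempt is refused
```

The key point is that the old image is still authentic; it is rejected purely because the counter has advanced past its version.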

Side-Channel and Fault Injection Countermeasures

Advanced attackers are increasingly turning to side-channel attacks, which exploit information leaked through power consumption, electromagnetic emissions, or timing variations, and to fault injection attacks, where glitches in the clock or voltage supply cause computational errors that reveal secrets.

Modern secure accelerators incorporate constant-time cryptographic blocks for attestation, Error-Correcting Code (ECC) across memories and interconnects, and dedicated sensors for detecting clock and voltage glitches, temperature anomalies, and tampering attempts. These sensors feed into the off-switch policy engine, allowing the chip to trigger protective measures when attack conditions are detected.

Secure Telemetry and Sealed Logs

For forensic analysis after a security incident, accelerators now include a tiny always-on domain that stores event digests in a protected buffer, signed by the device key. On reboot, the host can fetch these sealed logs to understand if an off-switch event was triggered by policy, operator command, or tamper detection. This capability is essential for compliance in regulated industries and for improving security postures over time.
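One way to picture a sealed log is as a chain of authenticated entries, where each tag also covers the previous tag. The sketch below assumes HMAC-SHA256 as a stand-in for the device signature; the class `SealedLog`, the function `verify_log`, and the event strings are hypothetical.

```python
import hashlib
import hmac
from typing import List, Tuple

GENESIS = b"\x00" * 32  # fixed starting value for the chain

class SealedLog:
    """Append-only event log kept by the always-on security domain."""

    def __init__(self, device_key: bytes):
        self._key = device_key
        self._head = GENESIS
        self.entries: List[Tuple[str, bytes]] = []

    def append(self, event: str) -> None:
        # Each tag covers the previous tag, linking the entries into a chain.
        tag = hmac.new(self._key, self._head + event.encode(), hashlib.sha256).digest()
        self.entries.append((event, tag))
        self._head = tag

def verify_log(entries: List[Tuple[str, bytes]], device_key: bytes) -> bool:
    """Recompute the chain; any edited, reordered, or dropped interior entry breaks it."""
    head = GENESIS
    for event, tag in entries:
        expected = hmac.new(device_key, head + event.encode(), hashlib.sha256).digest()
        if not hmac.compare_digest(tag, expected):
            return False
        head = tag
    return True

key = b"k" * 32  # placeholder for a device-unique key
log = SealedLog(key)
for event in ("boot", "off-switch: tamper sensor", "reset"):
    log.append(event)
print(verify_log(log.entries, key))  # True for the untouched log
```

A chain like this does not by itself reveal truncation of the newest entries; a real design would also need to protect the latest tag or an entry counter inside the always-on domain.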

The NPU Revolution: Security at the Edge

While data center GPUs dominate headlines, the integration of Neural Processing Units (NPUs) into client devices (laptops, smartphones, and IoT gadgets) is democratizing AI and bringing new security capabilities to the endpoint. Intel, AMD, and Qualcomm are embedding NPUs into their PC processors, and these units are proving to be powerful tools for security applications.

Offloading Security Workloads

Security software that traditionally consumed significant CPU resources can now be offloaded to the NPU. Acronis Cyber Protect Cloud, for example, analyzes behavioral patterns on PCs to detect ransomware and zero-day exploits. By using the NPU in Intel’s Core Ultra chips via Intel’s OpenVINO software, the solution offloads heavy AI tasks such as behavioral heuristics and anomaly scoring. This frees up to 92 percent of CPU resources, improving system performance and extending laptop battery life while maintaining robust security.

On-Device Phishing Protection

Bufferzone’s Safe Workspace platform provides anti-phishing protection that surpasses built-in browser defenses. Operating as a lightweight browser extension, it analyzes web pages using deep-learning AI models running on the NPU or GPU. Because the analysis happens on-device rather than in the cloud, it has 70 percent less latency than cloud-based approaches and never sends potentially sensitive browsing data to external servers. This privacy-preserving security model is increasingly attractive for enterprise and consumer applications.

Deepfake Detection

As AI-generated content becomes more sophisticated, detecting deepfakes has become a priority. McAfee’s Deepfake Detector runs on NPUs with at least 40 trillion operations per second (TOPS). It detects AI-generated audio in video streams playing in web browsers, providing real-time warnings to users without impacting system performance. This capability, running locally on the NPU, ensures that users can verify content authenticity without sending private conversations to the cloud for analysis.

Digital Fingerprinting at Scale

At the data center level, NVIDIA’s Morpheus software development kit leverages GPUs to detect deviations in behavior across every user, service, account, and system. Using digital fingerprinting AI workflows and data collected by BlueField DPUs, Morpheus achieves 100 percent visibility across the data center and accelerates threat detection from weeks to minutes. This demonstrates how AI accelerators are not just securing themselves but are actively securing the entire infrastructure around them.

Geopolitics and Sovereignty: The New World Order of AI Hardware

The security of AI accelerators has transcended technical concerns to become a matter of national strategy. The year 2026 has witnessed the definitive end of AI globalism and the rise of the Sovereign AI movement, where nations treat artificial intelligence as a critical component of state infrastructure rather than a commercial product.

The SAFE Chips Act and Export Controls

The passage of the Secure and Feasible Exports (SAFE) of Chips Act of 2025 codified the Silicon Fortress strategy into law. Unlike previous executive orders that could be modified unilaterally, the SAFE Chips Act introduces a statutory 30-month freeze on exporting the most advanced AI architectures, including NVIDIA’s Rubin series, to foreign adversary nations. This legislative lockdown ensures that chip denial is a permanent fixture of national security policy, not a temporary measure subject to diplomatic negotiation.

The act mandates domestic control over the entire AI stack, from raw silicon to model weights. Software hooks embedded in hardware allow governments to track the real-time location and utilization of high-end GPUs, creating unprecedented visibility into the global flow of computing power. This telemetry capability ensures that sanctioned nations cannot quietly acquire advanced AI chips through intermediaries.

Sovereign-as-a-Service and National AI Factories

The primary commercial beneficiaries of this geopolitical shift have been Sovereign-as-a-Service providers. NVIDIA has successfully pivoted from component supplier to national infrastructure partner, with CEO Jensen Huang famously declaring that “AI is the new oil.” The company’s 2026 projections suggest over $20 billion in revenue will come from building National AI Factories in regions like the Middle East and Europe: turnkey sovereign clouds that guarantee data residency and legal jurisdiction to the host nation.

Oracle and Microsoft have expanded their Sovereign Cloud offerings, providing governments with air-gapped environments that meet stringent regulatory requirements. Domestic memory manufacturers like Micron are seeing record demand as nations scramble to secure every component of the hardware stack. Meanwhile, companies with heavy reliance on globalized supply chains, such as ASML, must navigate a complex dual-track market, producing restricted Sovereign-compliant tools for Western markets while managing strictly controlled exports elsewhere.

The Balkanization of AI and the Rise of Open Source

The Sovereign AI movement has created a two-tier world: Intelligence-Rich nations with domestic fabs and Intelligence-Poor nations that must lease compute at high costs. This dynamic threatens to exacerbate global inequality and has sparked concerns about the fragmentation of AI development. Leading researchers, including OpenAI co-founder Ilya Sutskever, have noted that this fragmentation may hinder global safety alignment, as different nations develop siloed models with divergent ethical guardrails and technical standards.

In response, European and Asian researchers are increasingly turning to open-source RISC-V architectures. By bypassing U.S. proprietary control and the restrictions of the SAFE Chips Act, these communities hope to maintain access to advanced computing capabilities without geopolitical entanglement. This trend could fundamentally reshape the semiconductor landscape over the coming decade, creating alternative ecosystems that compete with established proprietary architectures.

Production for Security as Economic Strategy

The concept of “production for security” or “prosec” has emerged as a guiding principle for investment in 2026. Nations are prioritizing domestic production capacity for chips, data centers, and AI infrastructure, recognizing that reliance on foreign sources for these critical components represents an unacceptable strategic vulnerability. This focus extends beyond semiconductors to encompass the electricity generation needed to power AI factories and the processing and refining of rare earth minerals essential for advanced packaging.

Regulatory Frameworks and Compliance

As AI accelerators become more central to critical infrastructure, governments and standards bodies have moved to establish comprehensive security requirements. These regulations are shaping product development and creating compliance obligations for chip designers, system integrators, and AI deployers.

China’s Technical Specifications for AI Accelerator Security

In January 2026, China’s National Cybersecurity Standardisation Technical Committee (TC260) adopted the Network Security Standard Practice Guide: Technical Specifications for Security Functions of Artificial Intelligence Acceleration Chips. This document provides systematic security technical requirements and assessment methods for AI acceleration chips, focusing on seven key dimensions:

A. Hardware Security: Requirements for physical protection mechanisms, including tamper resistance and side-channel countermeasures.

B. Interface Security: Protection for all external communication interfaces against unauthorized access and data leakage.

C. Firmware Security: Secure boot, authenticated updates, and integrity verification for all programmable elements.

D. Secure Storage Units: Protection for on-chip and off-chip storage of sensitive data, including encryption and access controls.

E. Cryptographic Technology Mechanisms: Mandated use of approved cryptographic algorithms for all security functions.

F. Fault Detection and Diagnosis: Capabilities to detect and respond to hardware faults that could indicate attack attempts.

G. Data Protection: Comprehensive protection for AI models, training data, and inference results throughout their lifecycle.

The specification classifies security functional requirements into Basic Level and Enhanced Level, allowing for graduated implementation based on application criticality. This framework provides a foundation for security evaluation and certification of AI hardware in the Chinese market.

International Standards and the EU AI Act

The EU AI Act, now in full effect, creates legal requirements for privacy-preserving machine learning and data protection during inference. Confidential computing technologies provide the technical solution to these regulatory demands, allowing multiple parties to train on shared datasets without exposing raw data. The act’s risk-based approach means that AI systems deployed in high-risk applications must use hardware with verifiable security properties.

Future Horizons: Post-Quantum and Agentic AI

Looking toward the late 2020s and beyond, the security landscape for AI accelerators will continue to evolve in response to emerging threats and new computing paradigms.

Post-Quantum Cryptography Integration

The threat of quantum computing looms over current cryptographic protections. While today’s hardware encryption is robust against classical attacks, the “harvest now, decrypt later” strategy used by some threat actors poses a long-term risk. Encrypted data captured today could be decrypted years in the future when quantum computers become available.
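A common rule of thumb for reasoning about this risk, usually attributed to Michele Mosca, says data is exposed when the time it must stay secret plus the time needed to migrate to quantum-safe cryptography exceeds the time until a capable quantum computer exists. The sketch below encodes that inequality; the function name and the example figures are illustrative assumptions, not forecasts.

```python
def exposed_to_harvesting(shelf_life_years: float,
                          migration_years: float,
                          years_until_quantum: float) -> bool:
    """Mosca's inequality: data is at risk when shelf life + migration > time to quantum."""
    return shelf_life_years + migration_years > years_until_quantum

# Hypothetical inputs: weights that must stay secret for 10 years, a 5-year
# migration to post-quantum cryptography, and a 12-year quantum horizon.
print(exposed_to_harvesting(10, 5, 12))  # True: 15 years of exposure vs. 12
print(exposed_to_harvesting(2, 3, 12))   # False: short-lived data is safe
```

The rule makes the "harvest now" logic concrete: long-lived secrets such as proprietary model weights can be at risk well before any quantum computer is actually built.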

The industry is beginning to integrate post-quantum cryptography (PQC) into the hardware root of trust. Lattice-based cryptography, which is believed to be resistant to quantum attacks, will secure the attestation process for future AI accelerators. The challenge lies in implementing these complex algorithms without sacrificing the extreme low latency required for real-time AI inference. By 2028, we can expect to see PQC integrated into commercial AI hardware, ensuring that today’s secrets remain secure tomorrow.

Agentic Governance and Autonomous Systems

As AI transitions from passive chatbots to autonomous agents capable of managing physical infrastructure (power grids, financial markets, transportation systems), the security requirements for the underlying hardware will become even more stringent. The proposed Agentic OS Act of 2027 would mandate that any AI agent operating in critical sectors must run on a sovereign-certified operating system and domestic hardware.

These autonomous systems will require new security properties, including:

- Guaranteed Isolation: Agents must be unable to interfere with each other or with the underlying control systems.

- Verifiable Decision Trails: All actions must be auditable, with cryptographic proof of integrity.

- Fail-Safe Mechanisms: Hardware-enforced limits must prevent agents from taking actions that could cause physical harm or system instability.

- Secure Multi-Party Computation: Enabling multiple stakeholders to benefit from shared AI capabilities without revealing proprietary data.

The Challenge of Universal Attestation

As the industry matures, there is growing momentum behind Universal Attestation, a cross-vendor standard that allows a model to be verified as secure regardless of whether it runs on NVIDIA, AMD, or Intel hardware. This would commoditize AI hardware security and shift the focus back to the efficiency and capability of the models themselves. However, achieving consensus on attestation protocols and establishing the necessary trust infrastructure remains a significant challenge.

Balancing Security and Performance

Throughout this exploration of AI accelerator security, one theme recurs: the tension between security and performance. Security features consume power, add latency, and increase design complexity, all factors that run counter to the primary goals of AI accelerator design. The industry’s response has been to integrate security deeply into the architecture, rather than bolting it on as an afterthought.

The Cost of Insecurity

The calculus is shifting because the cost of insecurity has become too high to ignore. Model weights represent trillions of dollars in research and development investment. The loss of a proprietary model to a competitor or adversary could bankrupt a company. Physical safety depends on the integrity of AI systems controlling autonomous vehicles, industrial robots, and medical devices. Regulatory non-compliance can result in massive fines and market exclusion.

Performance-Positive Security

Forward-thinking designers are now pursuing performance-positive security features that not only protect but actually enhance efficiency. Memory encryption, for example, can double as a form of wear leveling for certain memory technologies. Secure partitioning enables more efficient multi-tenancy by providing stronger isolation guarantees, allowing cloud providers to pack workloads more densely. Hardware root of trust simplifies attestation and compliance verification, reducing the overhead of security audits.

Classifying Workloads and Right-Sizing Protections

A one-size-fits-all approach to security is neither efficient nor effective. The industry is moving toward workload-classified security profiles, where different applications receive different levels of protection based on their risk profile:

- High-Assurance Profile (medical devices, factory robots, automotive): Strong isolation, strict watchdogs, aggressive DMA fences, low power caps, and detailed logging. Throughput is secondary to guaranteed safety and security.

- Enterprise Profile (cloud inference, corporate AI): Confidential computing, secure boot, tenant isolation, and attestation. Balanced for performance while meeting compliance requirements.

- Consumer Profile (edge devices, personal assistants): On-device processing, privacy protection, and low-overhead security. Optimized for user experience and battery life.

This graduated approach ensures that security resources are allocated where they are most needed, avoiding unnecessary overhead for low-risk applications while providing robust protection for critical systems.
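The three profiles above could be expressed as a simple policy table. The sketch below takes the workload and protection names directly from the profile descriptions; the identifiers (`Profile`, `PROTECTIONS`, `protections_for`) and the choice to treat unknown workloads as high-assurance are assumptions, not an industry standard.

```python
from enum import Enum

class Profile(Enum):
    HIGH_ASSURANCE = "high-assurance"
    ENTERPRISE = "enterprise"
    CONSUMER = "consumer"

PROTECTIONS = {
    Profile.HIGH_ASSURANCE: {"strong isolation", "strict watchdogs", "aggressive DMA fences",
                             "low power caps", "detailed logging"},
    Profile.ENTERPRISE: {"confidential computing", "secure boot", "tenant isolation",
                         "attestation"},
    Profile.CONSUMER: {"on-device processing", "privacy protection", "low-overhead security"},
}

WORKLOAD_PROFILE = {
    "medical device": Profile.HIGH_ASSURANCE,
    "factory robot": Profile.HIGH_ASSURANCE,
    "automotive": Profile.HIGH_ASSURANCE,
    "cloud inference": Profile.ENTERPRISE,
    "corporate AI": Profile.ENTERPRISE,
    "edge device": Profile.CONSUMER,
    "personal assistant": Profile.CONSUMER,
}

def protections_for(workload: str) -> set:
    """Look up a workload's protections; unknown workloads get the strictest profile."""
    profile = WORKLOAD_PROFILE.get(workload, Profile.HIGH_ASSURANCE)
    return PROTECTIONS[profile]

print(sorted(protections_for("cloud inference")))
print(sorted(protections_for("unclassified workload")))  # falls back to high-assurance
```

Falling back to the strictest profile is one possible fail-closed choice; a deployment could equally refuse to schedule unclassified workloads at all.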

Conclusion: The Silicon Imperative

As we progress through 2026, the message from the semiconductor industry, the security community, and national governments is unequivocal: security must be designed into AI accelerators from the transistor up, not added as an afterthought. The era of trusting software to protect hardware is over; we now rely on hardware to protect software, data, and the very models that define the AI revolution.

The transition to hardware-level AI security represents one of the most significant milestones in computing history. By moving the root of trust from software to silicon, the industry is addressing the fundamental paradox of the cloud: how to share resources without sharing secrets. The architectures introduced this year by NVIDIA, AMD, Intel, and others have turned the high-bandwidth memory and massive interconnects of AI clusters into unified, secure environments where the world’s most valuable digital assets can be safely processed.

Yet challenges remain. The complexity of these chips makes independent auditing difficult. The globalization of the semiconductor supply chain creates opportunities for the insertion of hard-to-detect backdoors. The fragmentation of the global AI market into sovereign silos threatens to slow innovation and create incompatible security standards. And the looming threat of quantum computing means that today’s strongest protections may be obsolete within a decade.

For organizations deploying AI, the message is clear: security due diligence must extend to the hardware level. Questions about secure boot, memory encryption, attestation capabilities, and supply chain integrity should be standard parts of any procurement process. The chips powering AI are no longer just components; they are the foundation of digital trust.

The next few years will likely see the emergence of Confidential-only cloud regions, the widespread adoption of Universal Attestation standards, and the integration of post-quantum cryptography into commercial hardware. As AI becomes embedded in national infrastructure, healthcare, autonomous vehicles, and mission-critical systems, the chips powering this intelligence must be robust, not just powerful. The revolution must be constructed on a foundation of firm building blocks, not silicon magic.

We have taught machines to think intelligently. Now we must ensure that trust begins at the transistor. After all, what good is intelligence, artificial or otherwise, if it can be hijacked at the hardware level?