The enigmatic nature of cats has captivated humans for thousands of years. From the aloof gaze of a lounging tabby to the insistent meow at dawn, cat owners constantly find themselves wondering: “What is my cat feeling right now?” This ancient question is finally receiving a futuristic answer through the development of real-time cat emotion detectors. Leveraging breakthroughs in artificial intelligence (AI), machine learning, and computer vision, these innovative tools are beginning to bridge the communication gap between humans and their feline companions.

This article examines real-time cat emotion detection: the technology that powers it, the scientific research underpinning it, the methods used (from analyzing facial expressions to decoding vocalizations), and the implications of this technology for pet welfare and the human-animal bond.

Table of Contents

- The Science of Feline Emotions
- The Engineering Approach to Emotion Estimation
- Decoding the Face: Cat Facial Expression Recognition
- Listening to Whiskers: Vocalization Analysis
- The Body Speaks: Posture and Movement Analysis
- Multi-Modal Systems
- Leading Technologies and Real-World Applications
- Challenges and Skepticism
- The Future of Cat-Human Communication
- Conclusion

The Science of Feline Emotions

Before we can build a machine to detect cat emotions, we must first understand what emotions cats are capable of experiencing. While cats cannot tell us how they feel, extensive ethological research suggests they experience a range of basic emotions. These are typically categorized by valence (positive or negative) and arousal (high or low).

A. Positive Emotional States:

- Contentment: Often associated with purring, slow blinking, and a relaxed posture.
- Playfulness: Characterized by the “play bow,” pouncing, and chasing behaviors.
- Affection: Demonstrated through rubbing, kneading, and seeking physical proximity.

B. Negative Emotional States:

- Fear: Evidenced by hiding, freezing, dilated pupils, and flattened ears.
- Anxiety: Shown through restlessness, excessive grooming, and tail flicking.
- Pain/Anger: Indicated by hissing, growling, swatting, and an arched back.

The challenge for any emotion detection system is to accurately distinguish between these states in real-time. Humans are often poor at this, especially when it comes to subtle negative cues. A 2025 study published in Frontiers in Ethology found that while people were good at recognizing overt cat behaviors, their ability to recognize subtle negative cues was only slightly better than chance. This is where AI steps in.

The Engineering Approach to Emotion Estimation

The demand for automated emotional estimation technology is driven by the limitations of human observation. Human judgment can be affected by cognitive biases, anthropomorphism (attributing human emotions to cats), and simple inattention. Engineers and computer scientists are addressing these issues by developing systems that can see and hear what humans miss.

A. The Role of Deep Learning and Neural Networks:

At the heart of modern emotion detectors are deep learning models, particularly Convolutional Neural Networks (CNNs). These networks are trained on massive datasets of images, videos, and audio files. Instead of following explicit programming rules, they learn to identify patterns associated with specific emotions. For example, a CNN trained on cat faces learns to associate the subtle tightening of muscles around the eyes or the flattening of the ears with a state of alarm or fear.

B. From Pixels to Predictions:

The process typically involves several steps:

- Data Acquisition: A camera or microphone captures raw data (a video frame or a sound clip).
- Pre-processing: The system isolates the subject (the cat’s face or body) from the background and normalizes the data for analysis.
- Feature Extraction: The AI model identifies key features, such as facial landmarks (corners of the mouth, eyes, ear tips), body posture points, or acoustic features of a meow (pitch, frequency, duration).
- Classification: The extracted features are fed into a classifier that maps them to a specific emotional state, often with a confidence score.
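The four stages above can be sketched as a chain of small functions. This is a toy illustration only, not how any product named in this article works: the hard-coded frame, the brightness features, the nearest-centroid classifier, and the centroid values are all assumptions chosen to keep the example self-contained.

```python
import math
from dataclasses import dataclass
from typing import Dict, List

Frame = List[List[float]]  # grayscale image as rows of pixel intensities


@dataclass
class Prediction:
    label: str
    confidence: float


def acquire() -> Frame:
    # Stage 1 (data acquisition): a hard-coded 4x4 frame stands in for a camera.
    return [[10, 20, 30, 40], [20, 40, 60, 80],
            [200, 220, 240, 255], [180, 200, 220, 240]]


def preprocess(frame: Frame) -> Frame:
    # Stage 2 (pre-processing): scale intensities to [0, 1].
    # A real system would also crop the cat out of the background here.
    peak = max(max(row) for row in frame) or 1.0
    return [[px / peak for px in row] for row in frame]


def extract_features(frame: Frame) -> List[float]:
    # Stage 3 (feature extraction): toy features, the mean intensity of the
    # top and bottom halves. Real systems use landmarks or learned features.
    half = len(frame) // 2

    def mean(rows: Frame) -> float:
        values = [px for row in rows for px in row]
        return sum(values) / len(values)

    return [mean(frame[:half]), mean(frame[half:])]


def classify(features: List[float], centroids: Dict[str, List[float]]) -> Prediction:
    # Stage 4 (classification): score each label by negative distance to its
    # centroid, then turn the scores into a softmax confidence.
    scores = {label: -math.dist(features, c) for label, c in centroids.items()}
    total = sum(math.exp(s) for s in scores.values())
    best = max(scores, key=scores.get)
    return Prediction(best, math.exp(scores[best]) / total)


# Hypothetical centroids; a trained model would learn these from labeled data.
CENTROIDS = {"calm": [0.2, 0.9], "alarmed": [0.9, 0.2]}

prediction = classify(extract_features(preprocess(acquire())), CENTROIDS)
print(prediction.label, round(prediction.confidence, 2))
```

Keeping each stage as its own function mirrors the list above and makes it easy to swap a toy stage for a real one, such as replacing `extract_features` with a CNN.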

Decoding the Face: Cat Facial Expression Recognition

Facial Expression Recognition (FER) is one of the most promising avenues for real-time emotion detection. Just as humans have subtle micro-expressions, cats’ faces change in ways that can be scientifically categorized.

A. Convolutional Neural Networks in Action:

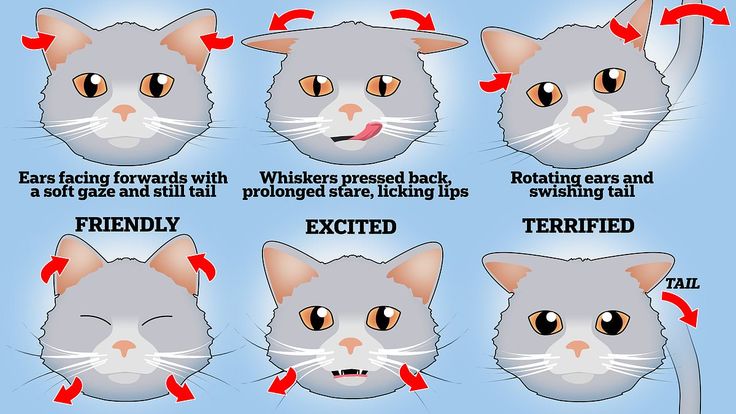

Research has demonstrated the effectiveness of CNNs in classifying cat facial expressions. A 2024 study presented a real-time model that could identify four distinct emotional states from cat faces: Pleased, Angry, Alarmed, and Calm. The system works by analyzing the configuration of the cat’s ears, eyes, whiskers, and mouth. For instance:

- Pleased: Often involves relaxed eyes, forward whiskers, and a neutral mouth.
- Alarmed: Characterized by dilated pupils, flattened ears (airplane ears), and tense facial muscles.

B. Landmark-Based Precision:

Advanced systems go a step further by identifying specific points on the cat’s face. ConductVision, a technology profiled for its animal emotion recognition capabilities, uses over 30 facial landmarks on animals like cats to build precise “emotion maps”. This allows the AI to detect asymmetries or subtle tensions that a human observer might overlook, such as the specific way a cat squints when in pain versus when content.
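To make the idea of landmark-based asymmetry concrete, here is a minimal sketch. It is not ConductVision's method, which this article does not describe in detail. The four-points-per-eye layout and the two measures (`eye_openness`, `asymmetry`) are hypothetical choices for illustration.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # (x, y) in image pixels


def eye_openness(top: Point, bottom: Point, inner: Point, outer: Point) -> float:
    """Eye height divided by eye width, so the value does not depend on image scale."""
    return math.dist(top, bottom) / math.dist(inner, outer)


def asymmetry(left: float, right: float) -> float:
    """Relative left/right difference: 0.0 means perfectly symmetric."""
    return abs(left - right) / max(left, right, 1e-9)


# Hypothetical landmark coordinates for a cat squinting with its right eye.
left_eye = eye_openness(top=(10, 10), bottom=(10, 14), inner=(14, 12), outer=(6, 12))
right_eye = eye_openness(top=(30, 11), bottom=(30, 13), inner=(26, 12), outer=(34, 12))

print(left_eye, right_eye, asymmetry(left_eye, right_eye))
```

A ratio such as height over width is used rather than a raw pixel distance so that the same cat scores the same whether it is near the camera or far from it.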

Listening to Whiskers: Vocalization Analysis

A cat’s meow is a complex signal, not just a simple noise. While adult cats primarily meow to communicate with humans, the variations in these vocalizations carry significant meaning.

A. From Meow to Spectrogram:

Real-time emotion detectors analyze vocalizations by converting sound waves into visual representations called spectrograms. A spectrogram plots how a sound’s energy is distributed across frequencies over time, so changes in pitch and loudness show up as visible patterns, creating a unique “fingerprint” for each sound.
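A spectrogram is typically computed with a short-time Fourier transform: the signal is cut into overlapping frames, each frame is windowed, and the magnitude of its frequency content is stored as one column. The sketch below uses only the Python standard library and a naive DFT so that it runs anywhere. Production code would more likely use an optimized FFT routine, and the frame length, hop size, and synthetic 1 kHz tone are assumptions for the example.

```python
import cmath
import math
from typing import List


def hann(n: int) -> List[float]:
    """Hann window, which tapers each frame to reduce spectral leakage."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def spectrogram(signal: List[float], frame_len: int = 64, hop: int = 32) -> List[List[float]]:
    """Return one magnitude spectrum (frame_len // 2 + 1 bins) per frame."""
    window = hann(frame_len)
    frames = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        chunk = [signal[start + i] * window[i] for i in range(frame_len)]
        spectrum = []
        for k in range(frame_len // 2 + 1):
            # Naive DFT for bin k; fine for a demo, too slow for real audio.
            acc = sum(chunk[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                      for n in range(frame_len))
            spectrum.append(abs(acc))
        frames.append(spectrum)
    return frames


# Synthetic stand-in for a recording: a pure 1 kHz tone sampled at 8 kHz.
SAMPLE_RATE = 8000
tone = [math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE) for t in range(256)]

spec = spectrogram(tone)
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * SAMPLE_RATE / 64  # bin index -> frequency in Hz
print(len(spec), "frames; strongest frequency in first frame:", peak_hz, "Hz")
```

Each inner list is one time slice. Stacking the slices side by side gives the image-like array that a vocalization classifier would take as input.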

B. AI-Powered Vocalization Databases:

Startups and tech giants are feeding thousands of these feline vocalization fingerprints into deep-learning models.

- Feline Glossary Classification 2.3: This tool claims to identify over 40 distinct cat call types across five behavioral categories.
- MeowTalk and CatSound: Foundational projects like the CatSound database and the MeowTalk app have paved the way, with early models achieving over 90% accuracy in classifying basic vocalization types, distinguishing between a “feed me” meow and an “I’m in pain” yowl.
- Baidu’s Multimodal Patent: Chinese technology company Baidu has filed a patent for a system that fuses audio data with motion and biometric data to better parse feline emotion, recognizing that context is key.

The Body Speaks: Posture and Movement Analysis

While the face and voice are critical, the cat’s entire body is a canvas of communication. A twitching tail, an arched back, or a slow blink can convey volumes.

A. Tail Posture and Spine Curvature:

Projects like FeelFur, a real-time pet emotion recognition system, have focused heavily on body-based analysis. FeelFur’s core classification model centers on feline tail posture and spine curvature to define emotional states such as Overjoyed, Friendly, Neutral, Anxious, and Fearful. This approach is often more consistent across different cat breeds than facial recognition, as breed-specific facial features (like a flat Persian face) can sometimes confuse facial analysis models.

B. Pose Estimation Tools:

To train these models, developers use advanced markerless pose estimation tools like DeepLabCut. These tools can automatically track the position of a cat’s paws, head, tail, and spine in a video, generating thousands of data points that describe the animal’s posture in every frame. This data is then used to teach the AI what a “fearful” posture looks like versus a “playful” one.
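Once a pose estimator has produced keypoints, posture features such as tail angle and spine bend reduce to simple geometry. The sketch below assumes four hypothetical keypoint names (`neck`, `mid_back`, `tail_base`, `tail_tip`) given as 2D image coordinates. It is not the output format of DeepLabCut or FeelFur's feature set, and it assumes the keypoints are distinct so no vector has zero length.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]


def vector(a: Point, b: Point) -> Point:
    """Vector pointing from a to b."""
    return (b[0] - a[0], b[1] - a[1])


def angle_between(u: Point, v: Point) -> float:
    """Unsigned angle in degrees between two 2D vectors."""
    dot = u[0] * v[0] + u[1] * v[1]
    norm = math.hypot(*u) * math.hypot(*v)
    # Clamp to guard against rounding slightly outside acos's domain.
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))


def posture_features(kp: Dict[str, Point]) -> Dict[str, float]:
    neck, mid, base, tip = kp["neck"], kp["mid_back"], kp["tail_base"], kp["tail_tip"]
    return {
        # 0 degrees: neck, mid-back and tail base lie on a straight line.
        "spine_bend_deg": angle_between(vector(neck, mid), vector(mid, base)),
        # Angle between the overall spine direction and the tail.
        "tail_angle_deg": angle_between(vector(neck, base), vector(base, tip)),
    }


# Hypothetical frames: a flat back with the tail straight up, then an arched back.
relaxed = posture_features({"neck": (0, 0), "mid_back": (5, 0),
                            "tail_base": (10, 0), "tail_tip": (10, -6)})
arched = posture_features({"neck": (0, 0), "mid_back": (5, -3),
                           "tail_base": (10, 0), "tail_tip": (10, -6)})
print(relaxed, arched)
```

Features like these, computed per frame, are the kind of compact numeric description a classifier could be trained on instead of raw pixels.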

Multi-Modal Systems

The most sophisticated real-time cat emotion detectors do not rely on a single data source. They are multi-modal systems that combine facial expression, vocalization, and body posture analysis for a more holistic and accurate interpretation.

A. The Power of Context:

Imagine a cat with its ears flattened (a sign of fear) but its tail held high (a sign of confidence). A uni-modal system might be confused. A multi-modal system, however, can weigh these conflicting signals. It might also incorporate data from wearable sensors (biometric data like heart rate) to determine if the cat is in a state of high arousal.
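One common way to weigh conflicting signals is late fusion: each modality produces its own probability for every emotion, and the system combines them with a weighted average. The sketch below illustrates that idea only. The modality names, weights, and probabilities are invented for the example and do not come from any system discussed here.

```python
from typing import Dict


def fuse(modalities: Dict[str, Dict[str, float]],
         weights: Dict[str, float]) -> Dict[str, float]:
    """Weighted average of per-modality probability distributions (late fusion)."""
    labels = sorted(set().union(*modalities.values()))
    total_weight = sum(weights[name] for name in modalities)
    return {
        label: sum(weights[name] * probs.get(label, 0.0)
                   for name, probs in modalities.items()) / total_weight
        for label in labels
    }


# Hypothetical outputs: the face model sees fear, the body model sees confidence,
# and a heart-rate sensor leans slightly toward fear.
observations = {
    "face": {"fearful": 0.7, "confident": 0.3},
    "body": {"fearful": 0.2, "confident": 0.8},
    "heart_rate": {"fearful": 0.6, "confident": 0.4},
}
trust = {"face": 0.4, "body": 0.4, "heart_rate": 0.2}

fused = fuse(observations, trust)
print(fused, "->", max(fused, key=fused.get))
```

In this example the fused result is close to a tie, which is itself useful information: a system can report low confidence instead of forcing a single label.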

B. Integration with Smart Hardware:

These software systems are increasingly being integrated into hardware. The FeelFur project, for example, combines a smart tracking camera with a React-based web dashboard and a mobile app. The camera streams video to a backend server where a dual-model system (facial and body-based) analyzes the frames in real-time, sending emotional insights directly to the owner’s phone. This shifts pet monitoring from passive observation (“Is my cat in the room?”) to active emotional awareness (“Is my cat anxious right now?”).

Leading Technologies and Real-World Applications

The concept of a “Real-Time Cat Emotion Detector” is not just theoretical. Several platforms and projects are bringing this technology to life, focusing on both commercial applications and animal welfare.

Anima – Universal Pet Communication Platform:

Anima is a React application that goes beyond cats and aims to support communication with a wide range of animals. Its core features include multi-species vocalization analysis (meows, purrs, chirps), a training system that learns from individual pets, and a foundation built on over 51 peer-reviewed research papers. It provides a visual spectrogram display and confidence scoring for emotional state interpretation.

FeelFur:

As discussed, FeelFur is specifically designed to address the emotional disconnect between owners and pets, particularly during separations. It offers a rotating emotion wheel, live emotion stream charts, and historical trend recording, providing a comprehensive emotional profile of the pet over time.

ConductVision:

This tool focuses on the science-backed analysis of animal faces. It uses deep learning to identify subtle facial expressions that might go unnoticed by humans, enhancing accuracy across species and minimizing human bias.

Intellipig and Agricultural Applications:

While focused on pigs, the Intellipig system is a prime example of how this technology can be applied in a practical setting. Developed by researchers at UWE Bristol and SRUC, it analyzes photos of pigs to detect signs of pain or distress, alerting farmers so they can intervene. This demonstrates the potential for similar systems to be used in veterinary clinics or shelters to monitor cat welfare 24/7.

Challenges and Skepticism

Despite the rapid advancements, the field of animal emotion AI is not without its challenges and vocal skeptics.

A. The Fantasy vs. Reality of Translation:

Psychologist Kevin Coffey, the creator of the DeepSqueak system (which deciphers rodent vocalizations), warns against overhyping these technologies. He argues that the “animal communication space is defined by the concepts important to them (food, safety, play), not complex human concepts.” The idea that AI can translate a cat’s meow into a full sentence of human language is, in his view, “total nonsense.” Instead, these tools are better at identifying basic biological drives and states.

B. Breed and Individual Variability:

A system trained on Siamese cats might not perform well on a Maine Coon. There is significant variability in facial structure, vocalization patterns, and behavior across different cat breeds, and even between individual cats. An effective emotion detector must be adaptable to these differences.

C. Environmental Factors:

Lighting, background noise, and camera angle can all affect the accuracy of real-time analysis. A model trained on high-quality studio images may fail when faced with a grainy, low-light video from a home security camera.

D. Misinterpretation and Human Response:

Perhaps the biggest risk is how humans use this information. The Frontiers in Ethology study highlighted a concerning trend: even when participants correctly recognized that a cat was in a negative state, a significant proportion still indicated they would engage in high-risk interactions with the animal. If an app tells an owner their cat is “angry,” but the owner chooses to ignore that warning and pick the cat up anyway, the technology has failed its primary purpose of improving welfare and safety.

The Future of Cat-Human Communication

The future of real-time cat emotion detection is bright, with several key trends likely to shape its development.

A. Personalized and Adaptive Models:

Future systems will likely move away from one-size-fits-all models. Owners will be able to train the AI on their specific cat, allowing it to learn the unique quirks of their pet’s behavior and vocalizations. This “owner-driven model tuning” is expected to improve accuracy over time.

B. Proactive Welfare Alerts:

Instead of just telling an owner that a cat is “stressed” in the moment, future systems will use historical data to predict and prevent negative states. If a cat consistently shows signs of anxiety at 3:00 PM every day, the system could alert the owner to a potential environmental stressor (like a delivery person or a neighbor’s dog) that they were previously unaware of.
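Spotting a pattern like the 3:00 PM example does not need a sophisticated model. Counting on how many distinct days an emotion was logged in each hour is enough for a first pass. The sketch below assumes a hypothetical log of `(timestamp, label)` pairs and an arbitrary threshold of three days, neither of which comes from a real product.

```python
from datetime import datetime
from typing import Dict, Iterable, List, Set, Tuple


def recurring_hours(events: Iterable[Tuple[datetime, str]],
                    label: str = "anxious",
                    min_days: int = 3) -> List[int]:
    """Hours of the day at which `label` was logged on at least `min_days` distinct days."""
    days_by_hour: Dict[int, Set] = {}
    for timestamp, emotion in events:
        if emotion == label:
            days_by_hour.setdefault(timestamp.hour, set()).add(timestamp.date())
    return sorted(hour for hour, days in days_by_hour.items() if len(days) >= min_days)


# Hypothetical detection log covering three days.
log = [
    (datetime(2025, 3, 1, 15, 5), "anxious"),
    (datetime(2025, 3, 1, 15, 20), "anxious"),  # same day and hour: counted once
    (datetime(2025, 3, 2, 15, 10), "anxious"),
    (datetime(2025, 3, 3, 15, 2), "anxious"),
    (datetime(2025, 3, 2, 9, 30), "anxious"),   # one-off, below the threshold
    (datetime(2025, 3, 3, 12, 0), "content"),
]

print(recurring_hours(log))  # hours worth flagging to the owner
```

Counting distinct days rather than raw detections keeps one long anxious episode from looking like a recurring daily pattern.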

C. Two-Way Communication Interfaces:

Projects like FeelFur envision systems that not only detect emotions but also allow for remote interaction. Imagine a scenario where the system detects your cat is lonely. You could trigger a voice interaction feature, allowing you to speak to your cat through a smart speaker, or even activate an automated toy to provide enrichment.

D. Integration into Veterinary Medicine:

Automated emotional estimation technology has immense potential in clinical settings. It could be used for continuous, objective pain assessment in hospitalized animals, helping veterinarians provide more timely and effective pain relief. As the review paper from Tohoku University suggests, this technology can contribute to “pet welfare enhancement, veterinary care, and ethology”.

Conclusion

The quest to understand our feline friends is as old as domestication itself. Today, that quest is being transformed by the power of artificial intelligence. Real-time cat emotion detectors, powered by deep learning, computer vision, and acoustic analysis, are evolving from a science-fiction fantasy into a tangible reality.

While these tools are not magical translators that will unlock philosophical debates with your cat, they represent a significant step forward in animal welfare. By providing an objective, real-time window into a cat’s emotional state, detecting subtle signs of pain, fear, or contentment that humans often miss, they empower owners and veterinarians to respond more effectively to an animal’s needs.

From platforms like Anima and FeelFur that offer multi-modal analysis, to foundational research in facial expression and vocalization recognition, the technology is rapidly maturing. Challenges remain, including skepticism from experts, breed variability, and the risk of human misuse. However, the direction is clear: we are moving towards a world where the bond between humans and cats is strengthened not by trying to make cats talk like people, but by using technology to finally listen to what they have been trying to tell us all along. The next time your cat meows at 4:00 a.m., you might not just hear a sound; you might finally understand its meaning.